With our newly released Model Mode, you can create deep trained and custom trained models and combine them into workflow graphs using model operators. Read on to learn more.

Model Mode Overview

With the release of Model Mode in Portal, you can now select, customize, train, and assemble your models from a growing selection of pre-trained model templates. You can then use these models as building blocks for more complex solutions. Navigate to the "four squares" icon in Portal to start building custom models today.

Model Types

You can now search, filter, organize, and customize your models all in one place. Easily mix and match your own custom AI creations with highly optimized Clarifai Models. Combine cutting-edge machine learning algorithms with fixed-function model operators that connect, route, and trigger behaviors in your workflows.

Build your solution with:

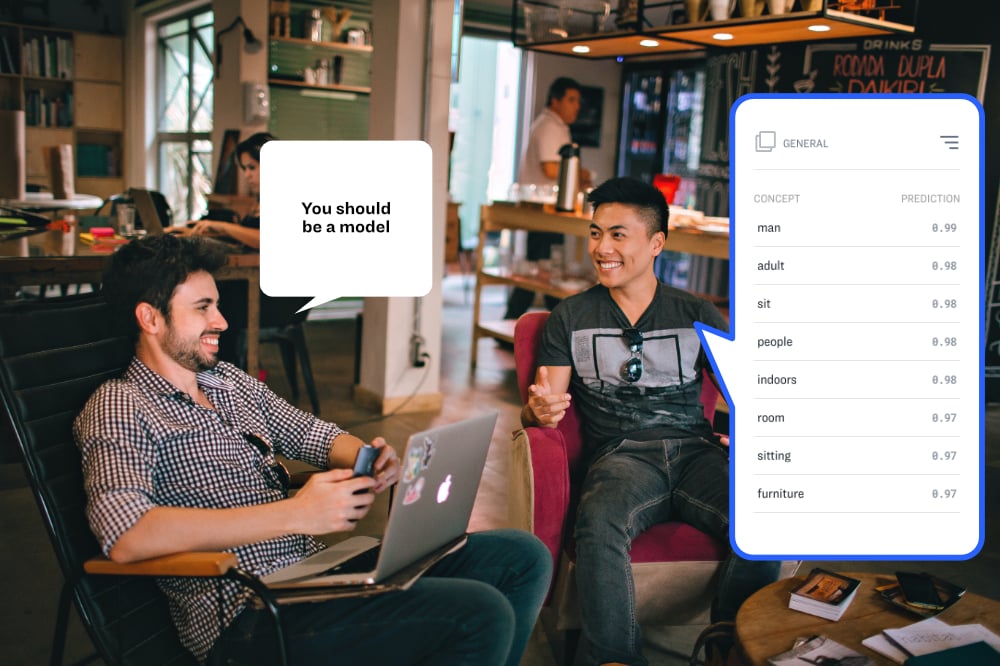

- Clarifai Models: Pre-built, ready-to-use image recognition and NLP models for the most common classification and detection tasks.

- Custom trained models: Extend the embedding context from a pre-trained model with your own custom concepts.

- Deep trained models: Recognize new visual features and push model accuracy to the limit with classification, detection and embedding templates.

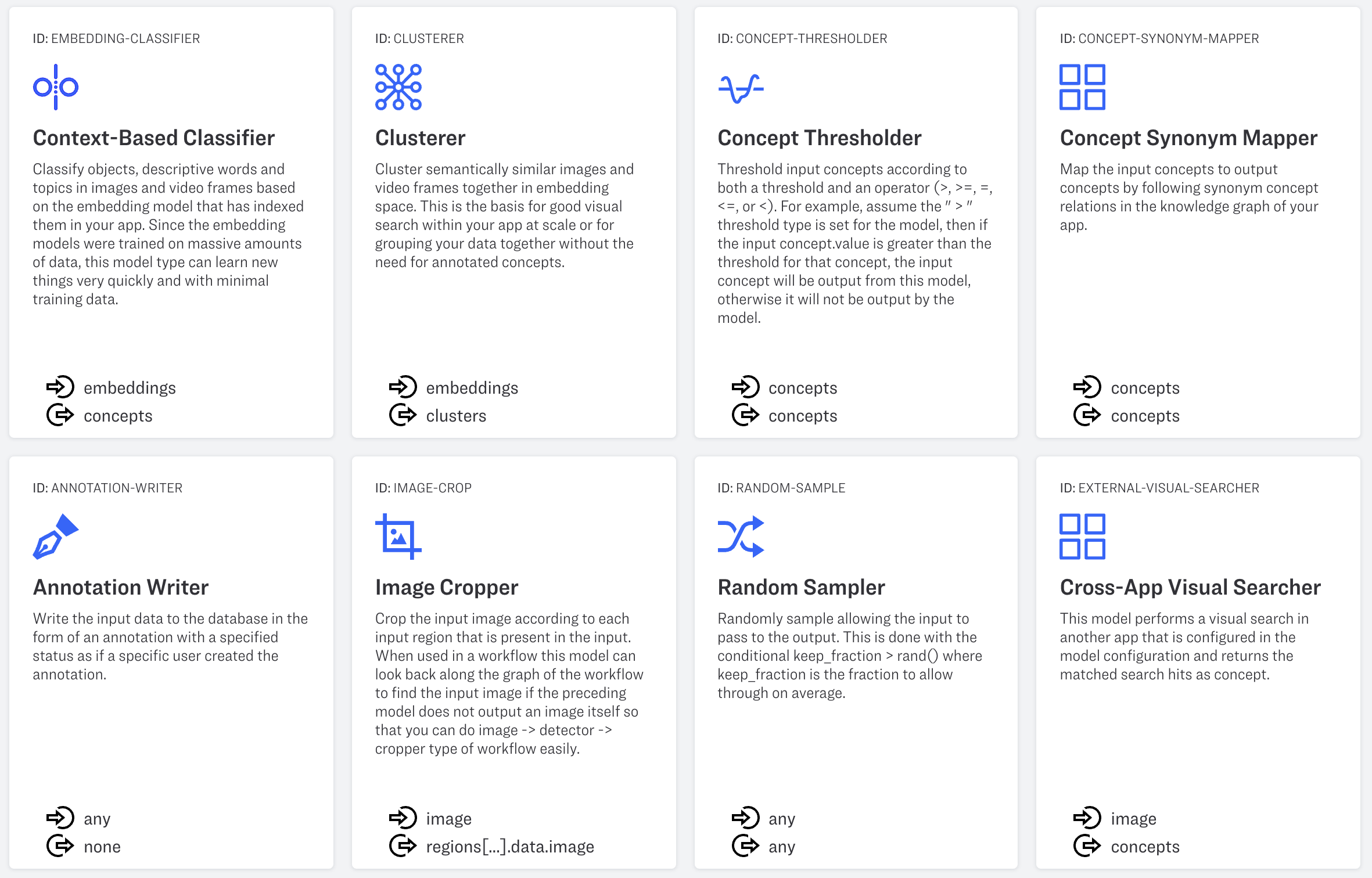

- Model operators: Create workflow graph nodes for concept thresholding, concept synonym mapping, annotation writing, image cropping, random sampling, and cross-app searching.
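
To make the idea of a fixed-function operator more concrete, here is a minimal sketch of what concept thresholding and concept synonym mapping might look like as plain Python functions. This is not the Clarifai API. The `Predictions` shape, the function names, and the rule of keeping the highest score when two concepts map to the same synonym are all assumptions made for illustration.

```python
from typing import Dict

# Hypothetical data shape: concept name -> confidence score in [0, 1].
Predictions = Dict[str, float]


def threshold_concepts(preds: Predictions, min_confidence: float) -> Predictions:
    """Keep only the concepts scored at or above min_confidence."""
    return {name: score for name, score in preds.items() if score >= min_confidence}


def map_synonyms(preds: Predictions, synonyms: Dict[str, str]) -> Predictions:
    """Rename concepts through a synonym table.

    Assumption: when two concepts collapse into one name, keep the higher score.
    """
    mapped: Predictions = {}
    for name, score in preds.items():
        canonical = synonyms.get(name, name)
        mapped[canonical] = max(score, mapped.get(canonical, 0.0))
    return mapped


preds = {"puppy": 0.92, "dog": 0.85, "cat": 0.10}
filtered = threshold_concepts(preds, 0.5)        # drops "cat"
merged = map_synonyms(filtered, {"puppy": "dog"})
print(merged)  # {'dog': 0.92}
```

Operators like these contain no learned parameters, which is why they can sit between models in a workflow and reshape outputs predictably.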

Creating Workflows

Workflows allow you to assemble your models in a graph, connecting the outputs of one model to the inputs of another. You can combine individual models to build more targeted solutions. Take a look at a couple of basic design patterns that demonstrate how this works.
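
As a rough mental model, a workflow graph can be thought of as a set of named nodes plus a map of which nodes feed which. The sketch below runs such a graph in dependency order using Python's standard `graphlib` module (Python 3.9+). It is only an illustration of the concept: `run_workflow`, the `nodes` and `edges` structures, and the toy node functions are hypothetical and do not reflect how Portal executes workflows.

```python
from graphlib import TopologicalSorter
from typing import Any, Callable, Dict, List


def run_workflow(
    nodes: Dict[str, Callable[..., Any]],
    edges: Dict[str, List[str]],
    workflow_input: Any,
) -> Dict[str, Any]:
    """Run every node after its upstream nodes have finished.

    nodes: node id -> callable standing in for a model or operator
    edges: node id -> list of upstream node ids; a node with no
           upstream nodes receives the workflow input directly
    """
    results: Dict[str, Any] = {}
    graph = {node_id: edges.get(node_id, []) for node_id in nodes}
    for node_id in TopologicalSorter(graph).static_order():
        upstream = edges.get(node_id, [])
        args = [results[u] for u in upstream] if upstream else [workflow_input]
        results[node_id] = nodes[node_id](*args)
    return results


# Toy stand-ins for models: one node feeds two downstream nodes.
nodes = {
    "tokenize": lambda text: text.split(),
    "count": lambda tokens: len(tokens),
    "upper": lambda tokens: [t.upper() for t in tokens],
}
edges = {"count": ["tokenize"], "upper": ["tokenize"]}

results = run_workflow(nodes, edges, "red green blue")
print(results["count"])  # 3
print(results["upper"])  # ['RED', 'GREEN', 'BLUE']
```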

Stacks

With a stacked model, you send images or videos as inputs into your application. A detector model draws bounding box regions around the different objects it finds. Each object is then cropped out of the image and sent as an input into a classification model.

This kind of workflow is useful any time you want to zoom in on specific objects in an image and classify them. For example, you might create a "face mask detector" that first detects individual faces, then crops each face out of the image, and finally classifies each crop as showing a person with or without a face mask.
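
The detect, crop, classify sequence described above can be sketched in a few lines of Python. Everything here is a stand-in: the image is a toy grid of grayscale values, and `fake_face_detector` and `fake_mask_classifier` are hypothetical placeholders for real trained models, not Clarifai functions.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

Image = List[List[int]]  # toy grayscale image: rows of pixel values


@dataclass
class Box:
    top: int
    left: int
    bottom: int  # exclusive
    right: int   # exclusive


def crop(image: Image, box: Box) -> Image:
    """Cut the boxed region out of the image."""
    return [row[box.left:box.right] for row in image[box.top:box.bottom]]


def run_stack(
    image: Image,
    detect: Callable[[Image], List[Box]],
    classify: Callable[[Image], str],
) -> List[Tuple[Box, str]]:
    """Detect regions, crop each one, and classify each crop."""
    return [(box, classify(crop(image, box))) for box in detect(image)]


# Stand-in models, for illustration only.
def fake_face_detector(image: Image) -> List[Box]:
    return [Box(0, 0, 2, 2), Box(0, 2, 2, 4)]


def fake_mask_classifier(face: Image) -> str:
    pixels = [p for row in face for p in row]
    return "mask" if sum(pixels) / len(pixels) > 128 else "no_mask"


image = [
    [200, 210, 10, 20],
    [220, 230, 30, 40],
]
labels = [label for _, label in run_stack(image, fake_face_detector, fake_mask_classifier)]
print(labels)  # ['mask', 'no_mask']
```

The classifier only ever sees the cropped region, which is the point of the pattern: it can specialize on one kind of object instead of the whole scene.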

Branching

With a branching model, you begin by sending images, video, or text as inputs into your application. Your inputs are then processed by a classification model, which returns a number of concepts with their corresponding prediction confidence scores. If a concept is predicted with confidence above a certain threshold, it is sent to an annotation writer with a "success" status, which automatically writes an annotation for that concept on that input. If a concept is predicted with confidence below that threshold, the annotation is written with a "pending review" status and awaits review by a person.

This branching workflow can be extremely helpful when labeling data and is key to enabling more sophisticated "active learning" workflows.
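
The routing rule in the branching pattern comes down to one comparison per concept. Here is a minimal sketch, assuming a single threshold and assuming that a score exactly equal to the threshold counts as "success" (the description above does not say which way ties go). `Annotation` and `route_predictions` are hypothetical names, not part of any Clarifai client.

```python
from typing import Dict, List, NamedTuple


class Annotation(NamedTuple):
    input_id: str
    concept: str
    confidence: float
    status: str  # "success" or "pending review"


def route_predictions(
    input_id: str, preds: Dict[str, float], threshold: float
) -> List[Annotation]:
    """Write one annotation per concept, choosing its status by confidence."""
    annotations = []
    for concept, confidence in preds.items():
        # Assumption: ties at the threshold are treated as confident.
        status = "success" if confidence >= threshold else "pending review"
        annotations.append(Annotation(input_id, concept, confidence, status))
    return annotations


anns = route_predictions("img-1", {"dog": 0.97, "wolf": 0.40}, threshold=0.9)
for ann in anns:
    print(ann.concept, ann.status)

# Collect the items a person still needs to look at.
review_queue = [ann for ann in anns if ann.status == "pending review"]
```

Raising the threshold sends more predictions to human review, and lowering it automates more of the labeling, so the threshold is the main dial for trading speed against label quality.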

With this release, Clarifai makes it easier than ever to integrate AI into your production workflows. To learn more, contact us or sign up.