NVIDIA Nemotron™ 3 Nano Omni now available on Clarifai

NVIDIA Nemotron 3 Nano Omni is a highly efficient open multimodal model that lets sub-agents complete tasks faster across vision, audio, and language.

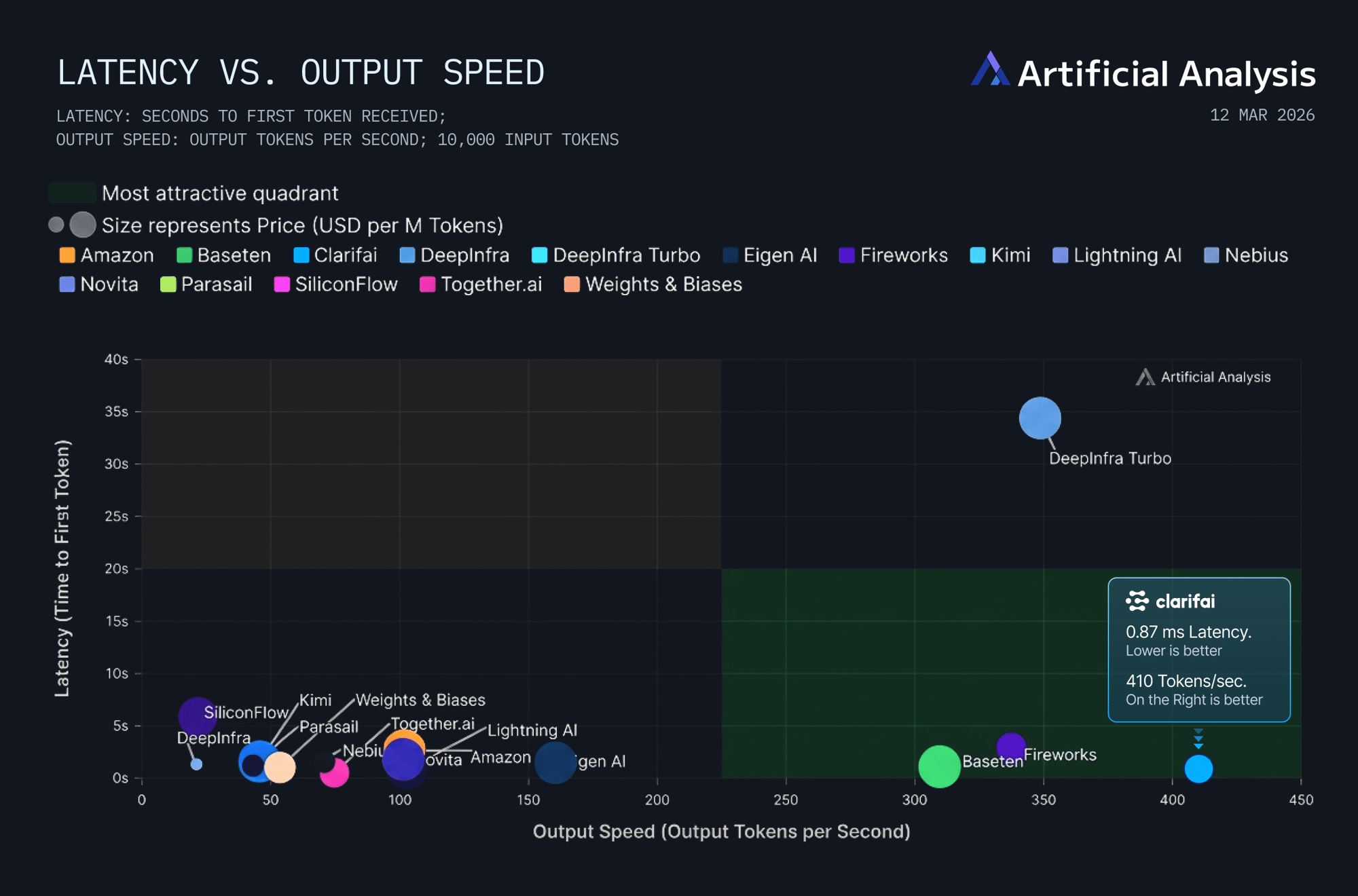

Day-0 Support at 400 Tokens Per Second on Clarifai Reasoning Engine.

Nemotron 3 Nano Omni

From computer-use agents navigating GUIs to complex document intelligence and real-time audio-video reasoning, Nemotron 3 Nano Omni handles everything your agents need to see and hear.

By collapsing vision, audio, and language into a single reasoning loop, it eliminates the latency of multi-model stacks. Running on Clarifai’s infrastructure, it helps you build smarter, "always-on" AI tools faster and at much lower cost.

Industry-Leading Throughput

Clarifai Reasoning Engine delivers Nemotron 3 Nano Omni at 400 tokens per second. With our optimized model delivery stack, your sub-agents get faster multimodal inference at the same cost.
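
As a rough illustration of what 400 tokens per second means for an agent loop, the sketch below estimates generation time for responses of different lengths. The function name and the example token counts are hypothetical, and the estimate covers decoding only: it ignores network overhead, queueing, and time to first token.

```python
def generation_seconds(output_tokens: int, tokens_per_second: float = 400.0) -> float:
    """Estimate decode time only; ignores network, queueing, and time to first token."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return output_tokens / tokens_per_second


# Hypothetical response sizes for a single sub-agent step.
for tokens in (200, 1000, 4000):
    print(f"{tokens} tokens -> {generation_seconds(tokens):.2f} s")
```

At this rate, a 1,000-token response takes about 2.5 seconds of generation time, which is why throughput matters for agents that chain many steps together.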

Unified Multimodal Intelligence

Nemotron 3 Nano Omni features a specialized Hybrid Transformer-Mamba MoE architecture. It functions as a multimodal perception sub-agent, maintaining a converged context across 256K tokens to interpret screens, documents, and video without fragmenting context.

Advanced Video & Audio Efficiency

Built with 3D convolution layers and Efficient Video Sampling (EVS), the model delivers ~2.5× lower compute for video reasoning, making it a cost-effective choice for continuous monitoring and research workflows.
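
To put the ~2.5× figure in perspective, the arithmetic below converts a compute reduction factor into the fraction of baseline compute that remains and the corresponding percentage saving. The function name is hypothetical, and the baseline is an arbitrary unit rather than a measured number.

```python
def relative_compute(reduction_factor: float) -> float:
    """Fraction of baseline compute remaining after an N-times reduction."""
    if reduction_factor <= 0:
        raise ValueError("reduction_factor must be positive")
    return 1.0 / reduction_factor


factor = 2.5  # the approximate reduction quoted above
remaining = relative_compute(factor)
saving_pct = (1.0 - remaining) * 100.0
print(f"Remaining compute: {remaining:.0%} of baseline, saving about {saving_pct:.0f}%")
```

In other words, a 2.5× reduction leaves roughly 40% of the baseline video compute, a saving of about 60% for the same footage.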

Run faster and cheaper with Clarifai

The full power of Nemotron 3 Nano Omni is realized when it's deployed on a platform engineered for efficiency. Clarifai's Compute Orchestration is the market leader for deploying this 30B-A3B architecture (roughly 30B total parameters, with about 3B active per token) at scale.

Maximized Output Speed

Our optimized infrastructure pushes the model past its baseline, delivering the high-frequency execution loops required for enterprise agents. This makes Clarifai the fastest provider for Nemotron 3 Nano Omni, significantly outperforming hyperscalers in raw throughput.

Enterprise-Grade Cost Efficiency

By replacing fragmented vision and speech stacks with a single unified model, we reduce inference hops and orchestration logic. Clarifai delivers elite multimodal performance at a fraction of the cost of frontier proprietary models, significantly reducing your Total Cost of Ownership (TCO).

Ultra-Low Latency

Alongside a 300K context length, we provide the low latency essential for real-time reasoning and long-running agent workflows where maintaining "eyes and ears" on the task matters most.