AI infrastructure for modern cybersecurity systems

Threat detection, anomaly analysis, and incident response don't run on batch jobs. They run in real time, on sensitive data, inside environments that can't be exposed. Clarifai gives cybersecurity teams the inference infrastructure to deploy AI that's fast enough to matter and secure enough to trust.

Built for security teams that need production AI — not another cloud dependency.

Why Security AI Infrastructure Is Uniquely Hard

You can't send security data to the public cloud

Threat telemetry, network logs, and endpoint data are among the most sensitive assets an organization holds. Running inference on shared cloud infrastructure isn't just a compliance risk — it's a security one.

Detection only works if it's fast enough

A model that takes seconds to return a verdict on a suspicious event is a model that arrives after the damage is done. Security AI needs inference speeds that match the pace of real-world attacks.

Models trained internally can't get deployed externally

Security organizations invest heavily in proprietary detection models. Getting those models into production — and keeping them updated — usually requires infrastructure and DevOps resources that security teams don't have.

Infrastructure that Meets Security-Grade Requirements

AI infrastructure shouldn't be fragile, fragmented, or overpriced. Clarifai delivers scale, reliability, security, and efficiency, so enterprise teams can focus on results, not infrastructure.

No data leaves your perimeter

Air-gapped and on-prem deployments keep all inference fully inside your environment.

Detection at the speed of the threat

Sub-300ms inference for real-time classification and alert enrichment.
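
As a rough illustration of what a sub-300ms target means in practice, the sketch below times each verdict and checks the 95th-percentile latency against a 300 ms budget. Everything here is an assumption for illustration: `classify_event` is a hypothetical stand-in for a real model call, not a Clarifai API, and the choice of p95 as the metric is ours rather than something the page specifies.

```python
import math
import time

LATENCY_BUDGET_MS = 300.0  # taken from the "sub-300ms" target; the p95 metric is an assumption


def classify_event(event: dict) -> str:
    """Hypothetical stand-in for a real detection model call."""
    return "suspicious" if event.get("failed_logins", 0) > 5 else "benign"


def timed_verdicts(events, classify=classify_event):
    """Classify each event and record how long each call took, in milliseconds."""
    verdicts, latencies_ms = [], []
    for event in events:
        start = time.perf_counter()
        verdicts.append(classify(event))
        latencies_ms.append((time.perf_counter() - start) * 1000.0)
    return verdicts, latencies_ms


def p95(latencies_ms):
    """Nearest-rank 95th percentile of a non-empty list of latencies."""
    ordered = sorted(latencies_ms)
    rank = math.ceil(0.95 * len(ordered))
    return ordered[rank - 1]


def within_budget(latencies_ms, budget_ms=LATENCY_BUDGET_MS):
    """True when the p95 latency fits inside the budget."""
    return p95(latencies_ms) <= budget_ms


if __name__ == "__main__":
    sample = [{"failed_logins": 10}, {"failed_logins": 0}]
    verdicts, latencies = timed_verdicts(sample)
    print(verdicts, within_budget(latencies))
```

Tracking a tail percentile rather than the mean is a common choice for alerting pipelines, since a handful of slow verdicts is exactly what lets an attack outrun detection.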

Proprietary models stay proprietary

Deploy custom detectors without exposing model weights or data to third-party infrastructure.

Full auditability by default

Every action logged, every access controlled, every workload traceable.

Key Capabilities

Unified Control

Manage GPU and inference resources from a single control plane. Eliminate tool sprawl with unified visibility across clusters, nodepools, and deployments—so teams spend less time managing infrastructure and more time shipping models.

Cross-Cloud Flexibility

Run workloads seamlessly across AWS, Azure, GCP, or on-prem hardware. Avoid cloud lock-in and dynamically route jobs to the most cost-effective or performant environment—all with consistent governance.
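
To make the routing idea concrete, here is a minimal sketch of one possible policy: pick the cheapest environment whose observed latency still meets a target. The `Environment` type, its fields, and all numbers are hypothetical and chosen only for illustration; this is not Clarifai's routing logic or API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Environment:
    """Hypothetical description of one deployment target."""
    name: str
    cost_per_hour: float    # illustrative figure, not real pricing
    p95_latency_ms: float   # illustrative figure, not a benchmark


def pick_environment(environments, max_latency_ms):
    """Return the cheapest environment that meets the latency target."""
    eligible = [e for e in environments if e.p95_latency_ms <= max_latency_ms]
    if not eligible:
        raise ValueError("no environment meets the latency target")
    return min(eligible, key=lambda e: e.cost_per_hour)


if __name__ == "__main__":
    candidates = [
        Environment("on-prem", cost_per_hour=2.0, p95_latency_ms=180.0),
        Environment("cloud-a", cost_per_hour=1.5, p95_latency_ms=350.0),
        Environment("cloud-b", cost_per_hour=3.0, p95_latency_ms=120.0),
    ]
    print(pick_environment(candidates, max_latency_ms=300.0).name)
```

A real policy would also have to respect data-residency rules, so that sensitive workloads are only ever considered for environments inside the perimeter.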

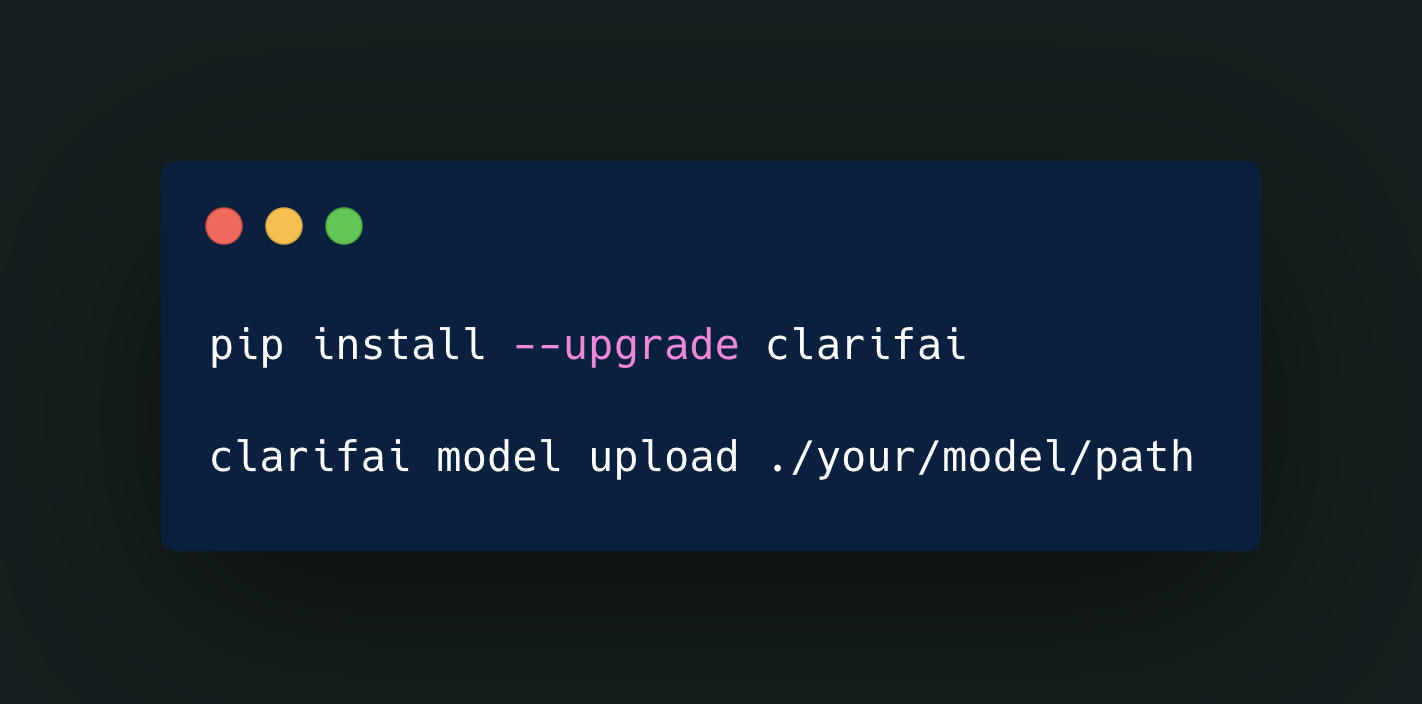

Custom Model Hosting

Upload and deploy proprietary models in secure Clarifai environments. Keep your IP protected while taking advantage of enterprise-grade infrastructure, monitoring, and scaling built specifically for GenAI workloads.

Agentic AI Ready

The Clarifai Reasoning Engine powers agentic and autonomous workflows with long-context optimization and streaming inference. Run complex reasoning chains faster while controlling cost per token.

Seamless Integrations Across the AI Stack

Clarifai connects with your existing ecosystem—PyTorch, TensorFlow, Hugging Face, AWS, Azure, GCP, and more. Bring your own model (BYOM) and frameworks while maintaining unified control, cost transparency, and observability across them all.

PERFORMANCE & PRICING

Optimized for Scale and Value.

Benchmark results for the GPT-OSS-120B model show Clarifai delivering industry-leading throughput and cost efficiency, placing it in the high-speed, low-price quadrant of the output speed vs. price comparison.

Figure: Output Speed vs Price (8 Oct 25)