Before we get started though, let’s first go over two basic definitions that will help you as you learn about each technique.

Classification refers to a type of labeling where an image/video is assigned certain concepts, with the goal of answering the question, “What is in this image/video?”

An image can be classified into a number of categories. For example, the below screenshot, taken from one of our model demos, shows an image that has been uploaded to Clarifai’s General Model.

As we can see, the model has given us a list of predicted concepts. These show how the model has classified the image, with each concept representing a different “classification.”
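To make the idea of a list of predicted concepts concrete, here is a minimal Python sketch. It assumes a model has already produced one confidence score per concept. The scores, the `top_concepts` helper, and the 0.5 threshold are all hypothetical illustrations, not Clarifai's actual API or output.

```python
from typing import Dict, List, Tuple


def top_concepts(
    scores: Dict[str, float], threshold: float = 0.5, limit: int = 5
) -> List[Tuple[str, float]]:
    """Return up to `limit` concepts scoring at least `threshold`, highest first."""
    kept = [(name, score) for name, score in scores.items() if score >= threshold]
    kept.sort(key=lambda pair: pair[1], reverse=True)
    return kept[:limit]


# Hypothetical per-concept confidences for a single image (made-up values).
example_scores = {
    "dog": 0.98,
    "grass": 0.91,
    "outdoors": 0.87,
    "cat": 0.03,
    "car": 0.01,
}

predicted = top_concepts(example_scores)
for name, score in predicted:
    print(f"{name}: {score:.2f}")
```

Note that several concepts can apply to the same image at once, which is why the result is a ranked list rather than a single label.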

Object detection is a computer vision technique that deals with distinguishing between objects in an image or video. While it is related to classification, it is more specific in what it identifies: it applies classification to distinct objects in an image/video and uses bounding boxes to tell us where each object is. Face detection is one form of object detection.

This technique is useful if you need to identify particular objects in a scene, like the cars parked on a street, versus the whole image.
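As a rough sketch of what a detection result might look like in code, the Python below pairs each label with a bounding box and then picks out only the cars in a scene. The `Detection` class, the `find_objects` helper, the coordinates, and the scores are all assumptions made for illustration; real detection models return their results in their own formats.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Detection:
    """One detected object: a label, a confidence, and a box in pixel coordinates."""
    label: str
    score: float
    left: int
    top: int
    right: int
    bottom: int

    def area(self) -> int:
        """Area of the bounding box in pixels (zero if the box is degenerate)."""
        return max(0, self.right - self.left) * max(0, self.bottom - self.top)


def find_objects(
    detections: List[Detection], label: str, min_score: float = 0.5
) -> List[Detection]:
    """Keep only detections with the requested label and enough confidence."""
    return [d for d in detections if d.label == label and d.score >= min_score]


# Hypothetical detections for a street scene (made-up values).
street_scene = [
    Detection("car", 0.95, 10, 120, 210, 260),
    Detection("car", 0.40, 300, 130, 380, 190),
    Detection("person", 0.88, 220, 90, 260, 240),
]

cars = find_objects(street_scene, "car")
for car in cars:
    print(car.label, car.score, (car.left, car.top, car.right, car.bottom))
```

Unlike a classification result, each entry here says both what the object is and where it sits in the image.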

Below, we can see the difference between classification and object detection:

As we can see, while figure 1 tells us all the things it sees in the image, in figure 2, only the faces are detected and isolated with a bounding box.

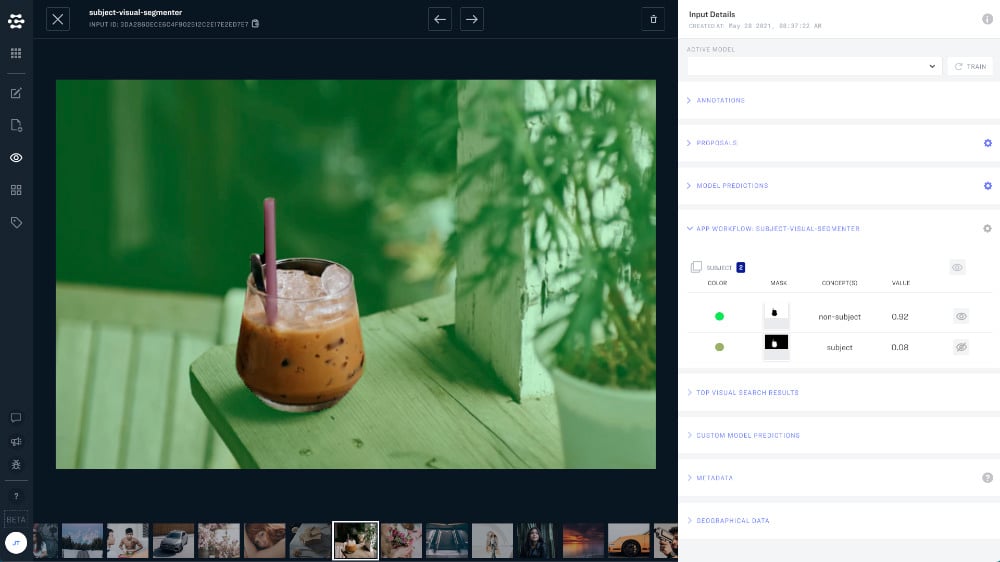

Segmentation is a type of labeling where each pixel in an image is labeled with given concepts. Here, whole images are divided into pixel groupings which can then be labeled and classified, with the goal of simplifying an image or changing how an image is presented to the model, to make it easier to analyze.

Segmentation models provide the exact outline of the object within an image. That is, pixel-by-pixel details are provided for a given object, as opposed to classification models, which identify what is in an image, and detection models, which place a bounding box around specific objects.
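The difference between a pixel-level outline and a bounding box can be sketched with a tiny label mask. In the Python below, the mask is a hypothetical 4x5 grid where 1 marks the object and 0 marks everything else; `mask_to_box` and `pixel_count` are illustrative helpers, not part of any particular library.

```python
from typing import List, Optional, Tuple

Mask = List[List[int]]  # one integer label per pixel, indexed as mask[row][col]


def mask_to_box(mask: Mask, label: int) -> Optional[Tuple[int, int, int, int]]:
    """Smallest box (left, top, right, bottom), inclusive, enclosing `label` pixels."""
    rows = [r for r, row in enumerate(mask) if label in row]
    cols = [c for row in mask for c, value in enumerate(row) if value == label]
    if not rows:
        return None  # the label does not appear in the mask
    return (min(cols), min(rows), max(cols), max(rows))


def pixel_count(mask: Mask, label: int) -> int:
    """Number of pixels carrying `label`."""
    return sum(row.count(label) for row in mask)


# Hypothetical mask: a small plus-shaped object on a background of zeros.
tiny_mask = [
    [0, 0, 0, 0, 0],
    [0, 0, 1, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 1, 0, 0],
]

box = mask_to_box(tiny_mask, 1)
object_pixels = pixel_count(tiny_mask, 1)
left, top, right, bottom = box
box_pixels = (right - left + 1) * (bottom - top + 1)
print(box, object_pixels, box_pixels)
```

The box covers more pixels than the object actually occupies, because a rectangle also takes in the background at its corners. The mask records only the pixels that belong to the object.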

It is related to both image classification and object detection, and in many workflows both of these techniques take place before segmentation begins. After the object in question is isolated with a bounding box, you can then go in and do a pixel-by-pixel outline of that object in the image.

Segmentation is particularly useful if you need to ignore the background of an image, for example when you want a model to identify and tag a shirt in a fashion editorial image taken on a busy street.
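Once a mask exists, ignoring the background amounts to keeping only the pixels whose label matches the object of interest. The sketch below assumes a grayscale image stored as nested lists and a mask of the same shape; the `remove_background` helper, the pixel values, and the label numbering are hypothetical.

```python
from typing import List

Grid = List[List[int]]


def remove_background(image: Grid, mask: Grid, keep_label: int, fill: int = 0) -> Grid:
    """Return a copy of `image` where pixels not labeled `keep_label` are set to `fill`."""
    return [
        [pixel if label == keep_label else fill for pixel, label in zip(image_row, mask_row)]
        for image_row, mask_row in zip(image, mask)
    ]


# Hypothetical 2x3 grayscale image and a mask where 1 marks the shirt.
image = [
    [10, 20, 30],
    [40, 50, 60],
]
shirt_mask = [
    [0, 1, 1],
    [0, 0, 1],
]

shirt_only = remove_background(image, shirt_mask, keep_label=1)
print(shirt_only)
```

The original image is left unchanged, so the same mask can be reused to produce other views, such as a background-only version.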

While human beings have always been able to do all the above in the blink of an eye, it’s taken many years of research, trial, and error to allow computers to emulate us. Nevertheless, today, thanks to computer vision, our devices are finally catching up to our needs.
